Compare commits

108 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

9ec99143d4 | ||

|

|

575a710ef8 | ||

|

|

7c16307643 | ||

|

|

e816529260 | ||

|

|

8282e59a39 | ||

|

|

a96bdb8d13 | ||

|

|

f7f1c3e871 | ||

|

|

632250083f | ||

|

|

0ebfe43133 | ||

|

|

bb367fe79e | ||

|

|

3a4d405c8e | ||

|

|

8f8adcddbb | ||

|

|

394c831b05 | ||

|

|

bb8b3a3bc3 | ||

|

|

6c5c932b98 | ||

|

|

9a151a5d4c | ||

|

|

f24595687b | ||

|

|

aa130d2d25 | ||

|

|

bccc49508e | ||

|

|

ad6db7ca97 | ||

|

|

b95d35d6fa | ||

|

|

3bf0cf5fbc | ||

|

|

dbdc0c818d | ||

|

|

e156c34e23 | ||

|

|

ee782e3794 | ||

|

|

90aa77a23a | ||

|

|

d4251c8b44 | ||

|

|

6f684e67e2 | ||

|

|

18cf202b5b | ||

|

|

54b2b71472 | ||

|

|

44ba47bafc | ||

|

|

7eb72634d8 | ||

|

|

5787d3470a | ||

|

|

1fce045ac2 | ||

|

|

794aa74782 | ||

|

|

b2e49a99a7 | ||

|

|

d208d53375 | ||

|

|

7158378eca | ||

|

|

0961d8cbe4 | ||

|

|

6ef5d11742 | ||

|

|

45e1d8370c | ||

|

|

420f995977 | ||

|

|

dbe1f91bd9 | ||

|

|

770c5fcb1f | ||

|

|

665d1ffe43 | ||

|

|

14ed221152 | ||

|

|

c41b9c1e32 | ||

|

|

17d4d68cbe | ||

|

|

b5a23fe430 | ||

|

|

2747be4a21 | ||

|

|

02da503a2f | ||

|

|

31c5d5c314 | ||

|

|

22e5b9aa44 | ||

|

|

400e8c9678 | ||

|

|

b06e744c0c | ||

|

|

ddbfe7765b | ||

|

|

c0f47fb712 | ||

|

|

7b0e8bf5f7 | ||

|

|

fa8ea58fe6 | ||

|

|

8c824e5d29 | ||

|

|

764fba74ec | ||

|

|

36c436772c | ||

|

|

897a621adc | ||

|

|

1f5802cdb4 | ||

|

|

0a57e2bab6 | ||

|

|

3ddfe94f2b | ||

|

|

c6fd5ac565 | ||

|

|

2a7cdcf12d | ||

|

|

759e546534 | ||

|

|

222337a5f0 | ||

|

|

9fb6122a9d | ||

|

|

9f0c01d62e | ||

|

|

6ed79d8fcb | ||

|

|

abb53c3219 | ||

|

|

6578d807ca | ||

|

|

e9acd32fd7 | ||

|

|

0c64165b49 | ||

|

|

6278659e55 | ||

|

|

ca2c97a98f | ||

|

|

164cc464dc | ||

|

|

faa99507ad | ||

|

|

d7a48d2829 | ||

|

|

c40936f1c4 | ||

|

|

38b26d4161 | ||

|

|

e17dffba4e | ||

|

|

ae1a91bf28 | ||

|

|

208c24b606 | ||

|

|

751450ebad | ||

|

|

e429ca3c7d | ||

|

|

9e26558666 | ||

|

|

759b30ec5c | ||

|

|

b7c195b76e | ||

|

|

7038fcf8ed | ||

|

|

54041313dc | ||

|

|

47a29f6628 | ||

|

|

839610d230 | ||

|

|

a0b324c1a8 | ||

|

|

1996807702 | ||

|

|

e91b7a85bf | ||

|

|

dddaf5c74f | ||

|

|

2a3935b221 | ||

|

|

a5becea6c9 | ||

|

|

1381b66619 | ||

|

|

eb946d948f | ||

|

|

46087ba886 | ||

|

|

f8764d1b81 | ||

|

|

b9095452da | ||

|

|

be8d23e782 |

@@ -1,10 +1,42 @@

|

|||||||

import requests

|

import requests

|

||||||

|

from configparser import RawConfigParser

|

||||||

|

import os

|

||||||

|

import re

|

||||||

|

|

||||||

def get_html(url):#网页请求核心

|

# content = open('proxy.ini').read()

|

||||||

headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/68.0.3440.106 Safari/537.36'}

|

# content = re.sub(r"\xfe\xff","", content)

|

||||||

getweb = requests.get(str(url),timeout=5,headers=headers)

|

# content = re.sub(r"\xff\xfe","", content)

|

||||||

getweb.encoding='utf-8'

|

# content = re.sub(r"\xef\xbb\xbf","", content)

|

||||||

try:

|

# open('BaseConfig.cfg', 'w').write(content)

|

||||||

return getweb.text

|

|

||||||

except:

|

config = RawConfigParser()

|

||||||

print("[-]Connect Failed! Please check your Proxy.")

|

if os.path.exists('proxy.ini'):

|

||||||

|

config.read('proxy.ini', encoding='UTF-8')

|

||||||

|

else:

|

||||||

|

with open("proxy.ini", "wt", encoding='UTF-8') as code:

|

||||||

|

print("[proxy]",file=code)

|

||||||

|

print("proxy=127.0.0.1:1080",file=code)

|

||||||

|

|

||||||

|

def get_html(url,cookies = None):#网页请求核心

|

||||||

|

if not str(config['proxy']['proxy']) == '':

|

||||||

|

proxies = {

|

||||||

|

"http" : "http://" + str(config['proxy']['proxy']),

|

||||||

|

"https": "https://" + str(config['proxy']['proxy'])

|

||||||

|

}

|

||||||

|

headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/60.0.3100.0 Safari/537.36'}

|

||||||

|

getweb = requests.get(str(url), headers=headers, proxies=proxies,cookies=cookies)

|

||||||

|

getweb.encoding = 'utf-8'

|

||||||

|

# print(getweb.text)

|

||||||

|

try:

|

||||||

|

return getweb.text

|

||||||

|

except:

|

||||||

|

print('[-]Connected failed!:Proxy error')

|

||||||

|

else:

|

||||||

|

headers = {

|

||||||

|

'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/68.0.3440.106 Safari/537.36'}

|

||||||

|

getweb = requests.get(str(url), headers=headers,cookies=cookies)

|

||||||

|

getweb.encoding = 'utf-8'

|

||||||

|

try:

|

||||||

|

return getweb.text

|

||||||

|

except:

|

||||||

|

print("[-]Connect Failed.")

|

||||||

@@ -2,6 +2,21 @@ import glob

|

|||||||

import os

|

import os

|

||||||

import time

|

import time

|

||||||

import re

|

import re

|

||||||

|

import sys

|

||||||

|

from ADC_function import *

|

||||||

|

import json

|

||||||

|

|

||||||

|

version='0.10.6'

|

||||||

|

|

||||||

|

def UpdateCheck():

|

||||||

|

html2 = get_html('https://raw.githubusercontent.com/wenead99/AV_Data_Capture/master/update_check.json')

|

||||||

|

html = json.loads(str(html2))

|

||||||

|

|

||||||

|

if not version == html['version']:

|

||||||

|

print('[*] * New update '+html['version']+' *')

|

||||||

|

print('[*] * Download *')

|

||||||

|

print('[*] '+html['download'])

|

||||||

|

print('[*]=====================================')

|

||||||

|

|

||||||

def movie_lists():

|

def movie_lists():

|

||||||

#MP4

|

#MP4

|

||||||

@@ -18,8 +33,10 @@ def movie_lists():

|

|||||||

f2 = glob.glob(os.getcwd() + r"\*.mkv")

|

f2 = glob.glob(os.getcwd() + r"\*.mkv")

|

||||||

# FLV

|

# FLV

|

||||||

g2 = glob.glob(os.getcwd() + r"\*.flv")

|

g2 = glob.glob(os.getcwd() + r"\*.flv")

|

||||||

|

# TS

|

||||||

|

h2 = glob.glob(os.getcwd() + r"\*.ts")

|

||||||

|

|

||||||

total = a2+b2+c2+d2+e2+f2+g2

|

total = a2+b2+c2+d2+e2+f2+g2+h2

|

||||||

return total

|

return total

|

||||||

|

|

||||||

def lists_from_test(custom_nuber): #电影列表

|

def lists_from_test(custom_nuber): #电影列表

|

||||||

@@ -45,6 +62,10 @@ def rreplace(self, old, new, *max):

|

|||||||

return new.join(self.rsplit(old, count))

|

return new.join(self.rsplit(old, count))

|

||||||

|

|

||||||

if __name__ =='__main__':

|

if __name__ =='__main__':

|

||||||

|

print('[*]===========AV Data Capture===========')

|

||||||

|

print('[*] Version '+version)

|

||||||

|

print('[*]=====================================')

|

||||||

|

UpdateCheck()

|

||||||

os.chdir(os.getcwd())

|

os.chdir(os.getcwd())

|

||||||

for i in movie_lists(): #遍历电影列表 交给core处理

|

for i in movie_lists(): #遍历电影列表 交给core处理

|

||||||

if '_' in i:

|

if '_' in i:

|

||||||

@@ -57,4 +78,4 @@ if __name__ =='__main__':

|

|||||||

print("[!]Cleaning empty folders")

|

print("[!]Cleaning empty folders")

|

||||||

CEF('JAV_output')

|

CEF('JAV_output')

|

||||||

print("[+]All finished!!!")

|

print("[+]All finished!!!")

|

||||||

time.sleep(3)

|

input("[+][+]Press enter key exit, you can check the error messge before you exit.\n[+][+]按回车键结束,你可以在结束之前查看错误信息。")

|

||||||

159

README.md

159

README.md

@@ -1,37 +1,68 @@

|

|||||||

# 日本AV元数据抓取工具 (刮削器)

|

# AV Data Capture 日本AV元数据刮削器

|

||||||

|

# 目录

|

||||||

|

* [前言](#前言)

|

||||||

|

* [捐助二维码](#捐助二维码)

|

||||||

|

* [效果图](#效果图)

|

||||||

|

* [免责声明](#免责声明)

|

||||||

|

* [如何使用](#如何使用)

|

||||||

|

* [下载](#下载)

|

||||||

|

* [简明教程](#简要教程)

|

||||||

|

* [模块安装](#1请安装模块在cmd终端逐条输入以下命令安装)

|

||||||

|

* [配置](#2配置proxyini)

|

||||||

|

* [运行软件](#4运行-av_data_capturepyexe)

|

||||||

|

* [异常处理(重要)](#5异常处理重要)

|

||||||

|

* [导入至EMBY](#7把jav_output文件夹导入到embykodi中根据封面选片子享受手冲乐趣)

|

||||||

|

* [输出文件示例](#8输出的文件如下)

|

||||||

|

* [写在后面](#9写在后面)

|

||||||

|

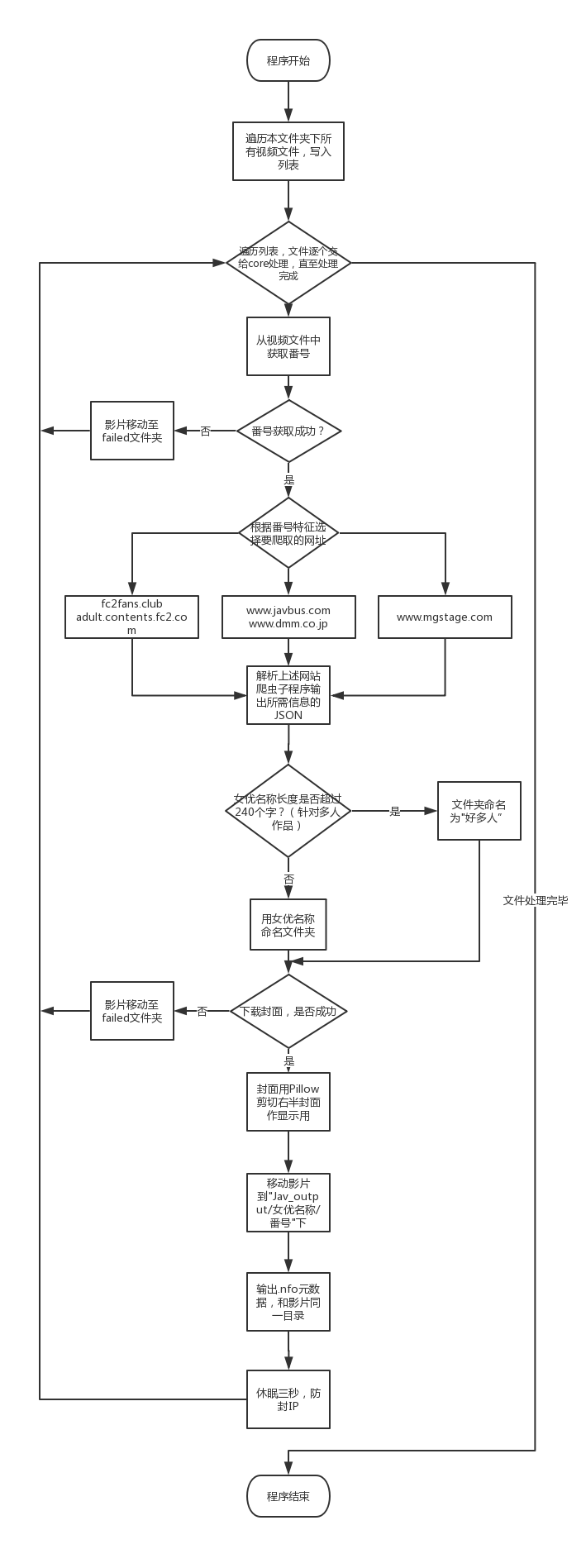

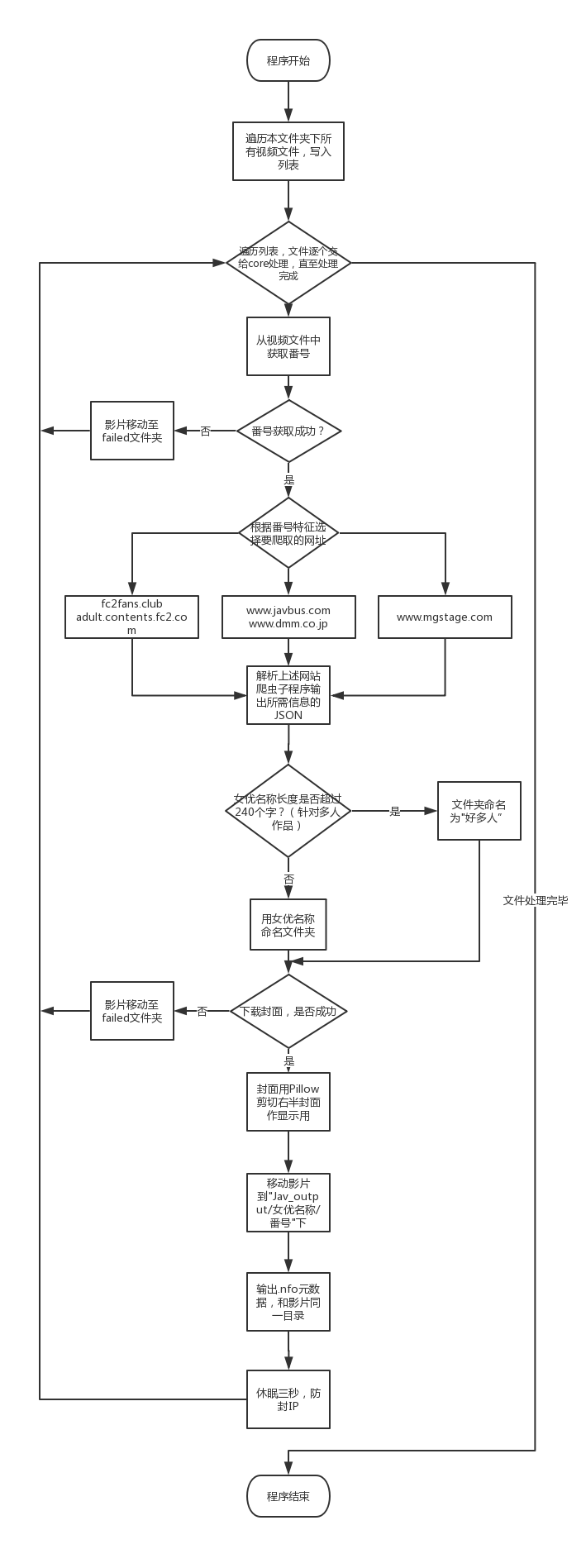

* [软件流程图](#10软件流程图)

|

||||||

|

* []()

|

||||||

|

# 前言

|

||||||

|

目前,我下的AV越来越多,也意味着AV要**集中地管理**,形成本地媒体库。现在有两款主流的AV元数据获取器,"EverAver"和"Javhelper"。前者的优点是元数据获取比较全,缺点是不能批量处理;后者优点是可以批量处理,但是元数据不够全。<br>

|

||||||

|

为此,综合上述软件特点,我写出了本软件,为了方便的管理本地AV,和更好的手冲体验。<br>

|

||||||

|

希望大家可以认真耐心地看完本文档,你的耐心换来的是完美的管理方式。<br>

|

||||||

|

本软件更新可能比较**频繁**,麻烦诸位用户**积极更新新版本**以获得**最佳体验**。

|

||||||

|

|

||||||

## 关于本软件 (~路star谢谢)

|

**可以结合pockies大神的[ 打造本地AV(毛片)媒体库 ](https://pockies.github.io/2019/03/25/everaver-emby-kodi/)看本文档**<br>

|

||||||

|

**tg官方电报群:[ 点击进群](https://t.me/AV_Data_Capture_Official)**<br>

|

||||||

|

**推荐用法: 使用该软件后,对于不能正常获取元数据的电影可以用[ Everaver ](http://everaver.blogspot.com/)来补救**<br>

|

||||||

|

暂不支持多P电影<br>

|

||||||

|

[回到目录](#目录)

|

||||||

|

|

||||||

**#0.5重大更新:新增对FC2,259LUXU,SIRO,300MAAN系列影片抓取支持,优化对无码视频抓取**

|

|

||||||

|

|

||||||

目前,我下的AV越来越多,也意味着AV要集中地管理,形成媒体库。现在有两款主流的AV元数据获取器,"EverAver"和"Javhelper"。前者的优点是元数据获取比较全,缺点是不能批量处理;后者优点是可以批量处理,但是元数据不够全。

|

|

||||||

|

|

||||||

为此,综合上述软件特点,我写出了本软件,为了方便的管理本地AV,和更好的手冲体验。没女朋友怎么办ʅ(‾◡◝)ʃ

|

|

||||||

|

|

||||||

**预计本周末适配DS Video,暂时只支持Kodi,EMBY**

|

|

||||||

|

|

||||||

**tg官方电报群:https://t.me/AV_Data_Capture_Official**

|

|

||||||

|

|

||||||

### **请认真阅读下面使用说明再使用** * [如何使用](#如何使用)

|

|

||||||

|

|

||||||

|

# 效果图

|

||||||

|

**由于法律因素,图片必须经马赛克处理**<br>

|

||||||

|

|

||||||

|

<br>

|

||||||

|

[回到目录](#目录)

|

||||||

|

|

||||||

|

# 捐助二维码

|

||||||

|

如果你觉得本软件好用,可以考虑捐助作者,多少钱无所谓,不强求,你的支持就是我的动力,非常感谢您的捐助

|

||||||

|

<br>

|

||||||

|

[回到目录](#目录)

|

||||||

|

|

||||||

## 软件流程图

|

# 免责声明

|

||||||

|

1.本软件仅供技术交流,学术交流使用<br>

|

||||||

|

2.本软件不提供任何有关淫秽色情的影视下载方式<br>

|

||||||

|

3.使用者使用该软件产生的一切法律后果由使用者承担<br>

|

||||||

|

4.该软件禁止任何商用行为<br>

|

||||||

|

[回到目录](#目录)

|

||||||

|

|

||||||

## 如何使用

|

# 如何使用

|

||||||

### **请认真阅读下面使用说明**

|

### 下载

|

||||||

**release的程序可脱离python环境运行,可跳过第一步(仅限windows平台)**

|

* release的程序可脱离python环境运行,可跳过 [模块安装](#1请安装模块在cmd终端逐条输入以下命令安装)<br>下载地址(**仅限Windows**):https://github.com/wenead99/AV_Data_Capture/releases

|

||||||

**下载地址(Windows):https://github.com/wenead99/AV_Data_Capture/releases**

|

* Linux,MacOS请下载源码包运行

|

||||||

1. 请安装requests,pyquery,lxml,Beautifulsoup4,pillow模块,可在CMD逐条输入以下命令安装

|

### 简要教程:<br>

|

||||||

|

**1.把软件拉到和电影的同一目录<br>2.设置ini文件的代理(路由器拥有自动代理功能的可以把proxy=后面内容去掉)<br>3.运行软件等待完成<br>4.把JAV_output导入至KODI,EMBY中。<br>详细请看以下教程**<br>

|

||||||

|

[回到目录](#目录)

|

||||||

|

|

||||||

|

## 1.请安装模块,在CMD/终端逐条输入以下命令安装

|

||||||

```python

|

```python

|

||||||

pip install requests

|

pip install requests

|

||||||

```

|

```

|

||||||

###

|

###

|

||||||

```python

|

```python

|

||||||

pip install pyquery

|

pip install pyquery

|

||||||

```

|

```

|

||||||

###

|

###

|

||||||

```python

|

```python

|

||||||

pip install lxml

|

pip install lxml

|

||||||

@@ -44,34 +75,80 @@ pip install Beautifulsoup4

|

|||||||

```python

|

```python

|

||||||

pip install pillow

|

pip install pillow

|

||||||

```

|

```

|

||||||

|

###

|

||||||

|

|

||||||

2. 你的AV在被软件管理前最好命名为番号:

|

|

||||||

|

[回到目录](#目录)

|

||||||

|

|

||||||

|

## 2.配置proxy.ini

|

||||||

|

#### 1.针对网络审查国家或地区的代理设置

|

||||||

|

打开```proxy.ini```,在```[proxy]```下的```proxy```行设置本地代理地址和端口,支持Shadowsocks/R,V2RAY本地代理端口:<br>

|

||||||

|

例子:```proxy=127.0.0.1:1080```<br>

|

||||||

|

**(路由器拥有自动代理功能的可以把proxy=后面内容去掉)**<br>

|

||||||

|

**如果遇到tineout错误,可以把文件的proxy=后面的地址和端口删除,并开启vpn全局模式,或者重启电脑,vpn,网卡**<br>

|

||||||

|

[回到目录](#目录)

|

||||||

|

|

||||||

|

#### 2.(可选)设置自定义目录和影片重命名规则

|

||||||

|

**已有默认配置**<br>

|

||||||

|

##### 命名参数<br>

|

||||||

|

>title = 片名<br>

|

||||||

|

>actor = 演员<br>

|

||||||

|

>studio = 公司<br>

|

||||||

|

>director = 导演<br>

|

||||||

|

>release = 发售日<br>

|

||||||

|

>year = 发行年份<br>

|

||||||

|

>number = 番号<br>

|

||||||

|

>cover = 封面链接<br>

|

||||||

|

>tag = 类型<br>

|

||||||

|

>outline = 简介<br>

|

||||||

|

>runtime = 时长<br>

|

||||||

|

##### **例子**:<br>

|

||||||

|

>目录结构规则:location_rule='JAV_output/'+actor+'/'+number **不推荐修改目录结构规则,抓取数据时新建文件夹容易出错**<br>

|

||||||

|

>影片命名规则:naming_rule='['+number+']-'+title<br> **在EMBY,KODI等本地媒体库显示的标题**

|

||||||

|

[回到目录](#目录)

|

||||||

|

## 3.把软件拷贝和AV的统一目录下

|

||||||

|

## 4.运行 ```AV_Data_capture.py/.exe```

|

||||||

|

你也可以把单个影片拖动到core程序<br>

|

||||||

|

<br>

|

||||||

|

[回到目录](#目录)

|

||||||

|

## 5.异常处理(重要)

|

||||||

|

### 关于连接拒绝的错误

|

||||||

|

请设置好[代理](#1针对网络审查国家或地区的代理设置)<br>

|

||||||

|

|

||||||

|

|

||||||

|

[回到目录](#目录)

|

||||||

|

### 关于番号提取失败或者异常

|

||||||

|

**目前可以提取元素的影片:JAVBUS上有元数据的电影,素人系列:300Maan,259luxu,siro等,FC2系列**<br>

|

||||||

|

>下一张图片来自Pockies的blog:https://pockies.github.io/2019/03/25/everaver-emby-kodi/ 原作者已授权<br>

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

目前作者已经完善了番号提取机制,功能较为强大,可提取上述文件名的的番号,如果出现提取失败或者异常的情况,请用以下规则命名<br>

|

||||||

|

**妈蛋不要喂软件那么多野鸡片子,不让软件好好活了,操**

|

||||||

```

|

```

|

||||||

COSQ-004.mp4

|

COSQ-004.mp4

|

||||||

```

|

```

|

||||||

或者

|

|

||||||

```

|

|

||||||

COSQ_004.mp4

|

|

||||||

```

|

|

||||||

文件名中间要有下划线或者减号"_","-",没有多余的内容只有番号为最佳,可以让软件更好获取元数据

|

文件名中间要有下划线或者减号"_","-",没有多余的内容只有番号为最佳,可以让软件更好获取元数据

|

||||||

对于多影片重命名,可以用ReNamer来批量重命名

|

对于多影片重命名,可以用[ReNamer](http://www.den4b.com/products/renamer)来批量重命名<br>

|

||||||

软件官网:http://www.den4b.com/products/renamer

|

[回到目录](#目录)

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

3. 把软件拷贝到AV的所在目录下,运行程序(中国大陆用户必须挂VPN,Shsadowsocks开全局代理)

|

|

||||||

4. 运行AV_Data_capture.py

|

|

||||||

5. **你也可以把单个影片拖动到core程序**

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

6. 软件会自动把元数据获取成功的电影移动到JAV_output文件夹中,根据女优分类,失败的电影移动到failed文件夹中。

|

|

||||||

|

|

||||||

7. 把JAV_output文件夹导入到EMBY,KODI中,根据封面选片子,享受手冲乐趣

|

|

||||||

|

|

||||||

|

## 6.软件会自动把元数据获取成功的电影移动到JAV_output文件夹中,根据女优分类,失败的电影移动到failed文件夹中。

|

||||||

|

## 7.把JAV_output文件夹导入到EMBY,KODI中,根据封面选片子,享受手冲乐趣

|

||||||

|

cookies大神的EMBY教程:[链接](https://pockies.github.io/2019/03/25/everaver-emby-kodi/#%E5%AE%89%E8%A3%85emby%E5%B9%B6%E6%B7%BB%E5%8A%A0%E5%AA%92%E4%BD%93%E5%BA%93)<br>

|

||||||

|

[回到目录](#目录)

|

||||||

|

## 8.输出的文件如下

|

||||||

|

|

||||||

|

|

||||||

|

<br>

|

||||||

|

[回到目录](#目录)

|

||||||

|

## 9.写在后面

|

||||||

|

怎么样,看着自己的AV被这样完美地管理,是不是感觉成就感爆棚呢?<br>

|

||||||

|

[回到目录](#目录)

|

||||||

|

## 10.软件流程图

|

||||||

|

<br>

|

||||||

|

[回到目录](#目录)

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|||||||

344

core.py

344

core.py

@@ -8,6 +8,8 @@ import javbus

|

|||||||

import json

|

import json

|

||||||

import fc2fans_club

|

import fc2fans_club

|

||||||

import siro

|

import siro

|

||||||

|

from ADC_function import *

|

||||||

|

from configparser import ConfigParser

|

||||||

|

|

||||||

#初始化全局变量

|

#初始化全局变量

|

||||||

title=''

|

title=''

|

||||||

@@ -16,38 +18,205 @@ year=''

|

|||||||

outline=''

|

outline=''

|

||||||

runtime=''

|

runtime=''

|

||||||

director=''

|

director=''

|

||||||

actor=[]

|

actor_list=[]

|

||||||

|

actor=''

|

||||||

release=''

|

release=''

|

||||||

number=''

|

number=''

|

||||||

cover=''

|

cover=''

|

||||||

imagecut=''

|

imagecut=''

|

||||||

tag=[]

|

tag=[]

|

||||||

|

naming_rule =''#eval(config['Name_Rule']['naming_rule'])

|

||||||

|

location_rule=''#eval(config['Name_Rule']['location_rule'])

|

||||||

|

#=====================本地文件处理===========================

|

||||||

|

def argparse_get_file():

|

||||||

|

import argparse

|

||||||

|

parser = argparse.ArgumentParser()

|

||||||

|

parser.add_argument("file", help="Write the file path on here")

|

||||||

|

args = parser.parse_args()

|

||||||

|

return args.file

|

||||||

|

def CreatFailedFolder():

|

||||||

|

if not os.path.exists('failed/'): # 新建failed文件夹

|

||||||

|

try:

|

||||||

|

os.makedirs('failed/')

|

||||||

|

except:

|

||||||

|

print("[-]failed!can not be make folder 'failed'\n[-](Please run as Administrator)")

|

||||||

|

os._exit(0)

|

||||||

|

def getNumberFromFilename(filepath):

|

||||||

|

global title

|

||||||

|

global studio

|

||||||

|

global year

|

||||||

|

global outline

|

||||||

|

global runtime

|

||||||

|

global director

|

||||||

|

global actor_list

|

||||||

|

global actor

|

||||||

|

global release

|

||||||

|

global number

|

||||||

|

global cover

|

||||||

|

global imagecut

|

||||||

|

global tag

|

||||||

|

global image_main

|

||||||

|

|

||||||

|

global naming_rule

|

||||||

|

global location_rule

|

||||||

|

|

||||||

|

#================================================获取文件番号================================================

|

||||||

|

try: #试图提取番号

|

||||||

|

# ====番号获取主程序====

|

||||||

|

try: # 普通提取番号 主要处理包含减号-的番号

|

||||||

|

filepath.strip('22-sht.me').strip('-HD').strip('-hd')

|

||||||

|

filename = str(re.sub("\[\d{4}-\d{1,2}-\d{1,2}\] - ", "", filepath)) # 去除文件名中文件名

|

||||||

|

file_number = re.search('\w+-\d+', filename).group()

|

||||||

|

except: # 提取不含减号-的番号

|

||||||

|

try: # 提取东京热番号格式 n1087

|

||||||

|

filename1 = str(re.sub("h26\d", "", filepath)).strip('Tokyo-hot').strip('tokyo-hot')

|

||||||

|

filename0 = str(re.sub(".*?\.com-\d+", "", filename1)).strip('_')

|

||||||

|

file_number = str(re.search('n\d{4}', filename0).group(0))

|

||||||

|

except: # 提取无减号番号

|

||||||

|

filename1 = str(re.sub("h26\d", "", filepath)) # 去除h264/265

|

||||||

|

filename0 = str(re.sub(".*?\.com-\d+", "", filename1))

|

||||||

|

file_number2 = str(re.match('\w+', filename0).group())

|

||||||

|

file_number = str(file_number2.replace(re.match("^[A-Za-z]+", file_number2).group(),re.match("^[A-Za-z]+", file_number2).group() + '-'))

|

||||||

|

#if not re.search('\w-', file_number).group() == 'None':

|

||||||

|

#file_number = re.search('\w+-\w+', filename).group()

|

||||||

|

#上面是插入减号-到番号中

|

||||||

|

print("[!]Making Data for [" + filename + "],the number is [" + file_number + "]")

|

||||||

|

# ====番号获取主程序=结束===

|

||||||

|

except Exception as e: #番号提取异常

|

||||||

|

print('[-]'+str(os.path.basename(filepath))+' Cannot catch the number :')

|

||||||

|

print('[-]' + str(os.path.basename(filepath)) + ' :', e)

|

||||||

|

print('[-]Move ' + os.path.basename(filepath) + ' to failed folder')

|

||||||

|

shutil.move(filepath, str(os.getcwd()) + '/' + 'failed/')

|

||||||

|

os._exit(0)

|

||||||

|

except IOError as e2:

|

||||||

|

print('[-]' + str(os.path.basename(filepath)) + ' Cannot catch the number :')

|

||||||

|

print('[-]' + str(os.path.basename(filepath)) + ' :',e2)

|

||||||

|

print('[-]Move ' + os.path.basename(filepath) + ' to failed folder')

|

||||||

|

shutil.move(filepath, str(os.getcwd()) + '/' + 'failed/')

|

||||||

|

os._exit(0)

|

||||||

|

try:

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

# ================================================网站规则添加开始================================================

|

||||||

|

|

||||||

|

try: #添加 需要 正则表达式的规则

|

||||||

|

#=======================javbus.py=======================

|

||||||

|

if re.search('^\d{5,}', file_number).group() in filename:

|

||||||

|

json_data = json.loads(javbus.main_uncensored(file_number))

|

||||||

|

except: #添加 无需 正则表达式的规则

|

||||||

|

# ====================fc2fans_club.py===================

|

||||||

|

if 'fc2' in filename:

|

||||||

|

json_data = json.loads(fc2fans_club.main(file_number.strip('fc2_').strip('fc2-').strip('ppv-').strip('PPV-')))

|

||||||

|

elif 'FC2' in filename:

|

||||||

|

json_data = json.loads(fc2fans_club.main(file_number.strip('FC2_').strip('FC2-').strip('ppv-').strip('PPV-')))

|

||||||

|

#print(file_number.strip('FC2_').strip('FC2-').strip('ppv-').strip('PPV-'))

|

||||||

|

#=======================javbus.py=======================

|

||||||

|

else:

|

||||||

|

json_data = json.loads(javbus.main(file_number))

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

#================================================网站规则添加结束================================================

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

title = json_data['title']

|

||||||

|

studio = json_data['studio']

|

||||||

|

year = json_data['year']

|

||||||

|

outline = json_data['outline']

|

||||||

|

runtime = json_data['runtime']

|

||||||

|

director = json_data['director']

|

||||||

|

actor_list = str(json_data['actor']).strip("[ ]").replace("'",'').replace(" ",'').split(',') #字符串转列表

|

||||||

|

release = json_data['release']

|

||||||

|

number = json_data['number']

|

||||||

|

cover = json_data['cover']

|

||||||

|

imagecut = json_data['imagecut']

|

||||||

|

tag = str(json_data['tag']).strip("[ ]").replace("'",'').replace(" ",'').split(',') #字符串转列表

|

||||||

|

actor = str(actor_list).strip("[ ]").replace("'",'').replace(" ",'')

|

||||||

|

|

||||||

|

#====================处理异常字符====================== #\/:*?"<>|

|

||||||

|

#if "\\" in title or "/" in title or ":" in title or "*" in title or "?" in title or '"' in title or '<' in title or ">" in title or "|" in title or len(title) > 200:

|

||||||

|

# title = title.

|

||||||

|

|

||||||

|

naming_rule = eval(config['Name_Rule']['naming_rule'])

|

||||||

|

location_rule =eval(config['Name_Rule']['location_rule'])

|

||||||

|

except IOError as e:

|

||||||

|

print('[-]'+str(e))

|

||||||

|

print('[-]Move ' + filename + ' to failed folder')

|

||||||

|

shutil.move(filepath, str(os.getcwd())+'/'+'failed/')

|

||||||

|

os._exit(0)

|

||||||

|

|

||||||

|

except Exception as e:

|

||||||

|

print('[-]'+str(e))

|

||||||

|

print('[-]Move ' + filename + ' to failed folder')

|

||||||

|

shutil.move(filepath, str(os.getcwd())+'/'+'failed/')

|

||||||

|

os._exit(0)

|

||||||

|

path = '' #设置path为全局变量,后面移动文件要用

|

||||||

|

def creatFolder():

|

||||||

|

global path

|

||||||

|

if len(actor) > 240: #新建成功输出文件夹

|

||||||

|

path = location_rule.replace("'actor'","'超多人'",3).replace("actor","'超多人'",3) #path为影片+元数据所在目录

|

||||||

|

#print(path)

|

||||||

|

else:

|

||||||

|

path = location_rule

|

||||||

|

#print(path)

|

||||||

|

if not os.path.exists(path):

|

||||||

|

os.makedirs(path)

|

||||||

#=====================资源下载部分===========================

|

#=====================资源下载部分===========================

|

||||||

def DownloadFileWithFilename(url,filename,path): #path = examle:photo , video.in the Project Folder!

|

def DownloadFileWithFilename(url,filename,path): #path = examle:photo , video.in the Project Folder!

|

||||||

import requests

|

config = ConfigParser()

|

||||||

try:

|

config.read('proxy.ini', encoding='UTF-8')

|

||||||

if not os.path.exists(path):

|

proxy = str(config['proxy']['proxy'])

|

||||||

os.makedirs(path)

|

if not str(config['proxy']['proxy']) == '':

|

||||||

r = requests.get(url)

|

try:

|

||||||

with open(str(path) + "/"+str(filename), "wb") as code:

|

if not os.path.exists(path):

|

||||||

code.write(r.content)

|

os.makedirs(path)

|

||||||

except IOError as e:

|

headers = {

|

||||||

print("[-]Movie not found in All website!")

|

'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/68.0.3440.106 Safari/537.36'}

|

||||||

#print("[*]=====================================")

|

r = requests.get(url, headers=headers,proxies={"http": "http://" + str(proxy), "https": "https://" + str(proxy)})

|

||||||

return "failed"

|

with open(str(path) + "/" + filename, "wb") as code:

|

||||||

except Exception as e1:

|

code.write(r.content)

|

||||||

print(e1)

|

# print(bytes(r),file=code)

|

||||||

print("[-]Download Failed2!")

|

except IOError as e:

|

||||||

time.sleep(3)

|

print("[-]Movie not found in All website!")

|

||||||

os._exit(0)

|

print("[-]" + filename, e)

|

||||||

def PrintFiles(path):

|

# print("[*]=====================================")

|

||||||

|

return "failed"

|

||||||

|

except Exception as e1:

|

||||||

|

print(e1)

|

||||||

|

print("[-]Download Failed2!")

|

||||||

|

time.sleep(3)

|

||||||

|

os._exit(0)

|

||||||

|

else:

|

||||||

|

try:

|

||||||

|

if not os.path.exists(path):

|

||||||

|

os.makedirs(path)

|

||||||

|

headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/68.0.3440.106 Safari/537.36'}

|

||||||

|

r = requests.get(url, headers=headers)

|

||||||

|

with open(str(path) + "/" + filename, "wb") as code:

|

||||||

|

code.write(r.content)

|

||||||

|

# print(bytes(r),file=code)

|

||||||

|

except IOError as e:

|

||||||

|

print("[-]Movie not found in All website!")

|

||||||

|

print("[-]" + filename, e)

|

||||||

|

# print("[*]=====================================")

|

||||||

|

return "failed"

|

||||||

|

except Exception as e1:

|

||||||

|

print(e1)

|

||||||

|

print("[-]Download Failed2!")

|

||||||

|

time.sleep(3)

|

||||||

|

os._exit(0)

|

||||||

|

def PrintFiles(path,naming_rule):

|

||||||

|

global title

|

||||||

try:

|

try:

|

||||||

if not os.path.exists(path):

|

if not os.path.exists(path):

|

||||||

os.makedirs(path)

|

os.makedirs(path)

|

||||||

with open(path + "/" + number + ".nfo", "wt", encoding='UTF-8') as code:

|

with open(path + "/" + number + ".nfo", "wt", encoding='UTF-8') as code:

|

||||||

print("<movie>", file=code)

|

print("<movie>", file=code)

|

||||||

print(" <title>" + title + "</title>", file=code)

|

print(" <title>" + naming_rule + "</title>", file=code)

|

||||||

print(" <set>", file=code)

|

print(" <set>", file=code)

|

||||||

print(" </set>", file=code)

|

print(" </set>", file=code)

|

||||||

print(" <studio>" + studio + "+</studio>", file=code)

|

print(" <studio>" + studio + "+</studio>", file=code)

|

||||||

@@ -56,11 +225,11 @@ def PrintFiles(path):

|

|||||||

print(" <plot>"+outline+"</plot>", file=code)

|

print(" <plot>"+outline+"</plot>", file=code)

|

||||||

print(" <runtime>"+str(runtime).replace(" ","")+"</runtime>", file=code)

|

print(" <runtime>"+str(runtime).replace(" ","")+"</runtime>", file=code)

|

||||||

print(" <director>" + director + "</director>", file=code)

|

print(" <director>" + director + "</director>", file=code)

|

||||||

print(" <poster>" + number + ".png</poster>", file=code)

|

print(" <poster>" + naming_rule + ".png</poster>", file=code)

|

||||||

print(" <thumb>" + number + ".png</thumb>", file=code)

|

print(" <thumb>" + naming_rule + ".png</thumb>", file=code)

|

||||||

print(" <fanart>"+number + '.jpg'+"</fanart>", file=code)

|

print(" <fanart>"+naming_rule + '.jpg'+"</fanart>", file=code)

|

||||||

try:

|

try:

|

||||||

for u in actor:

|

for u in actor_list:

|

||||||

print(" <actor>", file=code)

|

print(" <actor>", file=code)

|

||||||

print(" <name>" + u + "</name>", file=code)

|

print(" <name>" + u + "</name>", file=code)

|

||||||

print(" </actor>", file=code)

|

print(" </actor>", file=code)

|

||||||

@@ -73,133 +242,34 @@ def PrintFiles(path):

|

|||||||

for i in tag:

|

for i in tag:

|

||||||

print(" <tag>" + i + "</tag>", file=code)

|

print(" <tag>" + i + "</tag>", file=code)

|

||||||

except:

|

except:

|

||||||

aaaa=''

|

aaaaa=''

|

||||||

|

try:

|

||||||

|

for i in tag:

|

||||||

|

print(" <genre>" + i + "</genre>", file=code)

|

||||||

|

except:

|

||||||

|

aaaaaaaa=''

|

||||||

print(" <num>" + number + "</num>", file=code)

|

print(" <num>" + number + "</num>", file=code)

|

||||||

print(" <release>" + release + "</release>", file=code)

|

print(" <release>" + release + "</release>", file=code)

|

||||||

print(" <cover>"+cover+"</cover>", file=code)

|

print(" <cover>"+cover+"</cover>", file=code)

|

||||||

print(" <website>" + "https://www.javbus.com/"+number + "</website>", file=code)

|

print(" <website>" + "https://www.javbus.com/"+number + "</website>", file=code)

|

||||||

print("</movie>", file=code)

|

print("</movie>", file=code)

|

||||||

print("[+]Writeed! "+path + "/" + number + ".nfo")

|

print("[+]Writeed! "+path + "/" + number + ".nfo")

|

||||||

except IOError as e:

|

except IOError as e:

|

||||||

print("[-]Write Failed!")

|

print("[-]Write Failed!")

|

||||||

print(e)

|

print(e)

|

||||||

except Exception as e1:

|

except Exception as e1:

|

||||||

print(e1)

|

print(e1)

|

||||||

print("[-]Write Failed!")

|

print("[-]Write Failed!")

|

||||||

|

|

||||||

#=====================本地文件处理===========================

|

|

||||||

def argparse_get_file():

|

|

||||||

import argparse

|

|

||||||

parser = argparse.ArgumentParser()

|

|

||||||

parser.add_argument("file", help="Write the file path on here")

|

|

||||||

args = parser.parse_args()

|

|

||||||

return args.file

|

|

||||||

def getNumberFromFilename(filepath):

|

|

||||||

global title

|

|

||||||

global studio

|

|

||||||

global year

|

|

||||||

global outline

|

|

||||||

global runtime

|

|

||||||

global director

|

|

||||||

global actor

|

|

||||||

global release

|

|

||||||

global number

|

|

||||||

global cover

|

|

||||||

global imagecut

|

|

||||||

global tag

|

|

||||||

|

|

||||||

filename = str(re.sub("\[\d{4}-\d{1,2}-\d{1,2}\] - ", "", os.path.basename(filepath)))

|

|

||||||

print("[!]Making Data for ["+filename+"]")

|

|

||||||

file_number = str(re.search('\w+-\w+', filename).group())

|

|

||||||

#print(a)

|

|

||||||

try:

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

#================================================网站规则添加开始================================================

|

|

||||||

|

|

||||||

|

|

||||||

try: #添加 需要 正则表达式的规则

|

|

||||||

if re.search('^\d{5,}', file_number).group() in filename:

|

|

||||||

json_data = json.loads(javbus.main_uncensored(file_number))

|

|

||||||

except: #添加 无需 正则表达式的规则

|

|

||||||

if 'fc2' in filename:

|

|

||||||

json_data = json.loads(fc2fans_club.main(file_number))

|

|

||||||

elif 'FC2' in filename:

|

|

||||||

json_data = json.loads(fc2fans_club.main(file_number))

|

|

||||||

elif 'siro' in filename:

|

|

||||||

json_data = json.loads(siro.main(file_number))

|

|

||||||

elif 'SIRO' in filename:

|

|

||||||

json_data = json.loads(siro.main(file_number))

|

|

||||||

elif '259luxu' in filename:

|

|

||||||

json_data = json.loads(siro.main(file_number))

|

|

||||||

elif '259LUXU' in filename:

|

|

||||||

json_data = json.loads(siro.main(file_number))

|

|

||||||

else:

|

|

||||||

json_data = json.loads(javbus.main(file_number))

|

|

||||||

|

|

||||||

|

|

||||||

#================================================网站规则添加结束================================================

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

title = json_data['title']

|

|

||||||

studio = json_data['studio']

|

|

||||||

year = json_data['year']

|

|

||||||

outline = json_data['outline']

|

|

||||||

runtime = json_data['runtime']

|

|

||||||

director = json_data['director']

|

|

||||||

actor = str(json_data['actor']).strip("[ ]").replace("'",'').replace(" ",'').split(',')

|

|

||||||

release = json_data['release']

|

|

||||||

number = json_data['number']

|

|

||||||

cover = json_data['cover']

|

|

||||||

imagecut = json_data['imagecut']

|

|

||||||

tag = str(json_data['tag']).strip("[ ]").replace("'",'').replace(" ",'').split(',')

|

|

||||||

except:

|

|

||||||

print('[-]File '+filename+'`s number can not be caught')

|

|

||||||

print('[-]Move ' + filename + ' to failed folder')

|

|

||||||

if not os.path.exists('failed/'): # 新建failed文件夹

|

|

||||||

os.makedirs('failed/')

|

|

||||||

if not os.path.exists('failed/'):

|

|

||||||

print("[-]failed!Dirs can not be make (Please run as Administrator)")

|

|

||||||

time.sleep(3)

|

|

||||||

os._exit(0)

|

|

||||||

shutil.move(filepath, str(os.getcwd())+'/'+'failed/')

|

|

||||||

os._exit(0)

|

|

||||||

|

|

||||||

path = '' #设置path为全局变量,后面移动文件要用

|

|

||||||

|

|

||||||

def creatFolder():

|

|

||||||

actor2 = str(actor).strip("[ ]").replace("'",'').replace(" ",'')

|

|

||||||

global path

|

|

||||||

if not os.path.exists('failed/'): #新建failed文件夹

|

|

||||||

os.makedirs('failed/')

|

|

||||||

if not os.path.exists('failed/'):

|

|

||||||

print("[-]failed!Dirs can not be make (Please run as Administrator)")

|

|

||||||

os._exit(0)

|

|

||||||

if len(actor2) > 240: #新建成功输出文件夹

|

|

||||||

path = 'JAV_output' + '/' + '超多人' + '/' + number #path为影片+元数据所在目录

|

|

||||||

else:

|

|

||||||

path = 'JAV_output' + '/' + str(actor2) + '/' + str(number)

|

|

||||||

if not os.path.exists(path):

|

|

||||||

os.makedirs(path)

|

|

||||||

path = str(os.getcwd())+'/'+path

|

|

||||||

|

|

||||||

def imageDownload(filepath): #封面是否下载成功,否则移动到failed

|

def imageDownload(filepath): #封面是否下载成功,否则移动到failed

|

||||||

if DownloadFileWithFilename(cover,str(number) + '.jpg', path) == 'failed':

|

if DownloadFileWithFilename(cover,'Backdrop.jpg', path) == 'failed':

|

||||||

shutil.move(filepath, 'failed/')

|

shutil.move(filepath, 'failed/')

|

||||||

os._exit(0)

|

os._exit(0)

|

||||||

DownloadFileWithFilename(cover, number + '.jpg', path)

|

DownloadFileWithFilename(cover, 'Backdrop.jpg', path)

|

||||||

print('[+]Image Downloaded!', path +'/'+number+'.jpg')

|

print('[+]Image Downloaded!', path +'/'+'Backdrop.jpg')

|

||||||

def cutImage():

|

def cutImage():

|

||||||

if imagecut == 1:

|

if imagecut == 1:

|

||||||

try:

|

try:

|

||||||

img = Image.open(path + '/' + number + '.jpg')

|

img = Image.open(path + '/' + 'Backdrop' + '.jpg')

|

||||||

imgSize = img.size

|

imgSize = img.size

|

||||||

w = img.width

|

w = img.width

|

||||||

h = img.height

|

h = img.height

|

||||||

@@ -208,21 +278,21 @@ def cutImage():

|

|||||||

except:

|

except:

|

||||||

print('[-]Cover cut failed!')

|

print('[-]Cover cut failed!')

|

||||||

else:

|

else:

|

||||||

img = Image.open(path + '/' + number + '.jpg')

|

img = Image.open(path + '/' + 'Backdrop' + '.jpg')

|

||||||

w = img.width

|

w = img.width

|

||||||

h = img.height

|

h = img.height

|

||||||

img.save(path + '/' + number + '.png')

|

img.save(path + '/' + number + '.png')

|

||||||

|

|

||||||

def pasteFileToFolder(filepath, path): #文件路径,番号,后缀,要移动至的位置

|

def pasteFileToFolder(filepath, path): #文件路径,番号,后缀,要移动至的位置

|

||||||

houzhui = str(re.search('[.](AVI|RMVB|WMV|MOV|MP4|MKV|FLV|avi|rmvb|wmv|mov|mp4|mkv|flv)$', filepath).group())

|

houzhui = str(re.search('[.](AVI|RMVB|WMV|MOV|MP4|MKV|FLV|TS|avi|rmvb|wmv|mov|mp4|mkv|flv|ts)$', filepath).group())

|

||||||

os.rename(filepath, number + houzhui)

|

os.rename(filepath, number + houzhui)

|

||||||

shutil.move(number + houzhui, path)

|

shutil.move(number + houzhui, path)

|

||||||

|

|

||||||

if __name__ == '__main__':

|

if __name__ == '__main__':

|

||||||

filepath=argparse_get_file() #影片的路径

|

filepath=argparse_get_file() #影片的路径

|

||||||

|

CreatFailedFolder()

|

||||||

getNumberFromFilename(filepath) #定义番号

|

getNumberFromFilename(filepath) #定义番号

|

||||||

creatFolder() #创建文件夹

|

creatFolder() #创建文件夹

|

||||||

imageDownload(filepath) #creatFoder会返回番号路径

|

imageDownload(filepath) #creatFoder会返回番号路径

|

||||||

PrintFiles(path)#打印文件

|

PrintFiles(path,naming_rule)#打印文件

|

||||||

cutImage() #裁剪图

|

cutImage() #裁剪图

|

||||||

pasteFileToFolder(filepath,path) #移动文件

|

pasteFileToFolder(filepath,path) #移动文件

|

||||||

|

|||||||

@@ -7,7 +7,17 @@ def getTitle(htmlcode): #获取厂商

|

|||||||

#print(htmlcode)

|

#print(htmlcode)

|

||||||

html = etree.fromstring(htmlcode,etree.HTMLParser())

|

html = etree.fromstring(htmlcode,etree.HTMLParser())

|

||||||

result = str(html.xpath('/html/body/div[2]/div/div[1]/h3/text()')).strip(" ['']")

|

result = str(html.xpath('/html/body/div[2]/div/div[1]/h3/text()')).strip(" ['']")

|

||||||

return result

|

result2 = str(re.sub('\D{2}2-\d+','',result)).replace(' ','',1)

|

||||||

|

#print(result2)

|

||||||

|

return result2

|

||||||

|

def getActor(htmlcode):

|

||||||

|

try:

|

||||||

|

html = etree.fromstring(htmlcode, etree.HTMLParser())

|

||||||

|

result = str(html.xpath('/html/body/div[2]/div/div[1]/h5[5]/a/text()')).strip(" ['']")

|

||||||

|

return result

|

||||||

|

except:

|

||||||

|

return ''

|

||||||

|

|

||||||

def getStudio(htmlcode): #获取厂商

|

def getStudio(htmlcode): #获取厂商

|

||||||

html = etree.fromstring(htmlcode,etree.HTMLParser())

|

html = etree.fromstring(htmlcode,etree.HTMLParser())

|

||||||

result = str(html.xpath('/html/body/div[2]/div/div[1]/h5[3]/a[1]/text()')).strip(" ['']")

|

result = str(html.xpath('/html/body/div[2]/div/div[1]/h5[3]/a[1]/text()')).strip(" ['']")

|

||||||

@@ -15,44 +25,60 @@ def getStudio(htmlcode): #获取厂商

|

|||||||

def getNum(htmlcode): #获取番号

|

def getNum(htmlcode): #获取番号

|

||||||

html = etree.fromstring(htmlcode, etree.HTMLParser())

|

html = etree.fromstring(htmlcode, etree.HTMLParser())

|

||||||

result = str(html.xpath('/html/body/div[5]/div[1]/div[2]/p[1]/span[2]/text()')).strip(" ['']")

|

result = str(html.xpath('/html/body/div[5]/div[1]/div[2]/p[1]/span[2]/text()')).strip(" ['']")

|

||||||

|

#print(result)

|

||||||

return result

|

return result

|

||||||

def getRelease(number):

|

def getRelease(htmlcode2): #

|

||||||

a=ADC_function.get_html('http://adult.contents.fc2.com/article_search.php?id='+str(number).lstrip("FC2-").lstrip("fc2-").lstrip("fc2_").lstrip("fc2-")+'&utm_source=aff_php&utm_medium=source_code&utm_campaign=from_aff_php')

|

#a=ADC_function.get_html('http://adult.contents.fc2.com/article_search.php?id='+str(number).lstrip("FC2-").lstrip("fc2-").lstrip("fc2_").lstrip("fc2-")+'&utm_source=aff_php&utm_medium=source_code&utm_campaign=from_aff_php')

|

||||||

html=etree.fromstring(a,etree.HTMLParser())

|

html=etree.fromstring(htmlcode2,etree.HTMLParser())

|

||||||

result = str(html.xpath('//*[@id="container"]/div[1]/div/article/section[1]/div/div[2]/dl/dd[4]/text()')).strip(" ['']")

|

result = str(html.xpath('//*[@id="container"]/div[1]/div/article/section[1]/div/div[2]/dl/dd[4]/text()')).strip(" ['']")

|

||||||

return result

|

return result

|

||||||

def getCover(htmlcode,number): #获取厂商

|

def getCover(htmlcode,number,htmlcode2): #获取厂商 #

|

||||||

a = ADC_function.get_html('http://adult.contents.fc2.com/article_search.php?id=' + str(number).lstrip("FC2-").lstrip("fc2-").lstrip("fc2_").lstrip("fc2-") + '&utm_source=aff_php&utm_medium=source_code&utm_campaign=from_aff_php')

|

#a = ADC_function.get_html('http://adult.contents.fc2.com/article_search.php?id=' + str(number).lstrip("FC2-").lstrip("fc2-").lstrip("fc2_").lstrip("fc2-") + '&utm_source=aff_php&utm_medium=source_code&utm_campaign=from_aff_php')

|

||||||

html = etree.fromstring(a, etree.HTMLParser())

|

html = etree.fromstring(htmlcode2, etree.HTMLParser())

|

||||||

result = str(html.xpath('//*[@id="container"]/div[1]/div/article/section[1]/div/div[1]/a/img/@src')).strip(" ['']")

|

result = str(html.xpath('//*[@id="container"]/div[1]/div/article/section[1]/div/div[1]/a/img/@src')).strip(" ['']")

|

||||||

return 'http:'+result

|

if result == '':

|

||||||

def getOutline(htmlcode,number): #获取番号

|

html = etree.fromstring(htmlcode, etree.HTMLParser())

|

||||||

a = ADC_function.get_html('http://adult.contents.fc2.com/article_search.php?id=' + str(number).lstrip("FC2-").lstrip("fc2-").lstrip("fc2_").lstrip("fc2-") + '&utm_source=aff_php&utm_medium=source_code&utm_campaign=from_aff_php')

|

result2 = str(html.xpath('//*[@id="slider"]/ul[1]/li[1]/img/@src')).strip(" ['']")

|

||||||

html = etree.fromstring(a, etree.HTMLParser())

|

return 'http://fc2fans.club' + result2

|

||||||

|

return 'http:' + result

|

||||||

|

|

||||||

|

def getOutline(htmlcode2,number): #获取番号 #

|

||||||

|

#a = ADC_function.get_html('http://adult.contents.fc2.com/article_search.php?id=' + str(number).lstrip("FC2-").lstrip("fc2-").lstrip("fc2_").lstrip("fc2-") + '&utm_source=aff_php&utm_medium=source_code&utm_campaign=from_aff_php')

|

||||||

|

html = etree.fromstring(htmlcode2, etree.HTMLParser())

|

||||||

result = str(html.xpath('//*[@id="container"]/div[1]/div/article/section[4]/p/text()')).replace("\\n",'',10000).strip(" ['']").replace("'",'',10000)

|

result = str(html.xpath('//*[@id="container"]/div[1]/div/article/section[4]/p/text()')).replace("\\n",'',10000).strip(" ['']").replace("'",'',10000)

|

||||||

return result

|

return result

|

||||||

# def getTag(htmlcode,number): #获取番号

|

def getTag(htmlcode): #获取番号

|

||||||

# a = ADC_function.get_html('http://adult.contents.fc2.com/article_search.php?id=' + str(number).lstrip("FC2-").lstrip("fc2-").lstrip("fc2_").lstrip("fc2-") + '&utm_source=aff_php&utm_medium=source_code&utm_campaign=from_aff_php')

|

html = etree.fromstring(htmlcode, etree.HTMLParser())

|

||||||

# html = etree.fromstring(a, etree.HTMLParser())

|

result = str(html.xpath('/html/body/div[2]/div/div[1]/h5[4]/a/text()'))

|

||||||

# result = str(html.xpath('//*[@id="container"]/div[1]/div/article/section[4]/p/text()')).replace("\\n",'',10000).strip(" ['']").replace("'",'',10000)

|

return result.strip(" ['']").replace("'",'').replace(' ','')

|

||||||

# return result

|

def getYear(release):

|

||||||

|

try:

|

||||||

|

result = re.search('\d{4}',release).group()

|

||||||

|

return result

|

||||||

|

except:

|

||||||

|

return ''

|

||||||

|

|

||||||

def main(number):

|

def main(number2):

|

||||||

str(number).lstrip("FC2-").lstrip("fc2-").lstrip("fc2_").lstrip("fc2-")

|

number=number2.replace('PPV','').replace('ppv','')

|

||||||

|

htmlcode2 = ADC_function.get_html('http://adult.contents.fc2.com/article_search.php?id='+str(number).lstrip("FC2-").lstrip("fc2-").lstrip("fc2_").lstrip("fc2-")+'&utm_source=aff_php&utm_medium=source_code&utm_campaign=from_aff_php')

|

||||||

htmlcode = ADC_function.get_html('http://fc2fans.club/html/FC2-' + number + '.html')

|

htmlcode = ADC_function.get_html('http://fc2fans.club/html/FC2-' + number + '.html')

|

||||||

dic = {

|

dic = {

|

||||||

'title': getTitle(htmlcode),

|

'title': getTitle(htmlcode),

|

||||||

'studio': getStudio(htmlcode),

|

'studio': getStudio(htmlcode),

|

||||||

'year': getRelease(number),

|

'year': '',#str(re.search('\d{4}',getRelease(number)).group()),

|

||||||

'outline': getOutline(htmlcode,number),

|

'outline': getOutline(htmlcode,number),

|

||||||

'runtime': '',

|

'runtime': getYear(getRelease(htmlcode)),

|

||||||

'director': getStudio(htmlcode),

|

'director': getStudio(htmlcode),

|

||||||

'actor': '',

|

'actor': getActor(htmlcode),

|

||||||

'release': getRelease(number),

|

'release': getRelease(number),

|

||||||

'number': number,

|

'number': 'FC2-'+number,

|

||||||

'cover': getCover(htmlcode,number),

|

'cover': getCover(htmlcode,number,htmlcode2),

|

||||||

'imagecut': 0,

|

'imagecut': 0,

|

||||||

'tag':" ",

|

'tag':getTag(htmlcode),

|

||||||

}

|

}

|

||||||

|

#print(getTitle(htmlcode))

|

||||||

|

#print(getNum(htmlcode))

|

||||||

js = json.dumps(dic, ensure_ascii=False, sort_keys=True, indent=4, separators=(',', ':'),)#.encode('UTF-8')

|

js = json.dumps(dic, ensure_ascii=False, sort_keys=True, indent=4, separators=(',', ':'),)#.encode('UTF-8')

|

||||||

return js

|

return js

|

||||||

|

|

||||||

|

#print(main('1051725'))

|

||||||

120

javbus.py

120

javbus.py

@@ -9,19 +9,17 @@ from bs4 import BeautifulSoup#need install

|

|||||||

from PIL import Image#need install

|

from PIL import Image#need install

|

||||||

import time

|

import time

|

||||||

import json

|

import json

|

||||||

|

from ADC_function import *

|

||||||

def get_html(url):#网页请求核心

|

import siro

|

||||||

headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/68.0.3440.106 Safari/537.36'}

|

|

||||||

getweb = requests.get(str(url),timeout=5,headers=headers).text

|

|

||||||

try:

|

|

||||||

return getweb

|

|

||||||

except:

|

|

||||||

print("[-]Connect Failed! Please check your Proxy.")

|

|

||||||

|

|

||||||

def getTitle(htmlcode): #获取标题

|

def getTitle(htmlcode): #获取标题

|

||||||

doc = pq(htmlcode)

|

doc = pq(htmlcode)

|

||||||

title=str(doc('div.container h3').text()).replace(' ','-')

|

title=str(doc('div.container h3').text()).replace(' ','-')

|

||||||

return title

|

try:

|

||||||

|

title2 = re.sub('n\d+-','',title)

|

||||||

|

return title2

|

||||||

|

except:

|

||||||

|

return title

|

||||||

def getStudio(htmlcode): #获取厂商

|

def getStudio(htmlcode): #获取厂商

|

||||||

html = etree.fromstring(htmlcode,etree.HTMLParser())

|

html = etree.fromstring(htmlcode,etree.HTMLParser())

|

||||||

result = str(html.xpath('/html/body/div[5]/div[1]/div[2]/p[5]/a/text()')).strip(" ['']")

|

result = str(html.xpath('/html/body/div[5]/div[1]/div[2]/p[5]/a/text()')).strip(" ['']")

|

||||||

@@ -34,7 +32,6 @@ def getCover(htmlcode): #获取封面链接

|

|||||||

doc = pq(htmlcode)

|

doc = pq(htmlcode)

|

||||||

image = doc('a.bigImage')

|

image = doc('a.bigImage')

|

||||||

return image.attr('href')

|

return image.attr('href')

|

||||||

print(image.attr('href'))

|

|

||||||

def getRelease(htmlcode): #获取出版日期

|

def getRelease(htmlcode): #获取出版日期

|

||||||

html = etree.fromstring(htmlcode, etree.HTMLParser())

|

html = etree.fromstring(htmlcode, etree.HTMLParser())

|

||||||

result = str(html.xpath('/html/body/div[5]/div[1]/div[2]/p[2]/text()')).strip(" ['']")

|

result = str(html.xpath('/html/body/div[5]/div[1]/div[2]/p[2]/text()')).strip(" ['']")

|

||||||

@@ -62,8 +59,10 @@ def getOutline(htmlcode): #获取演员

|

|||||||

doc = pq(htmlcode)

|

doc = pq(htmlcode)

|

||||||

result = str(doc('tr td div.mg-b20.lh4 p.mg-b20').text())

|

result = str(doc('tr td div.mg-b20.lh4 p.mg-b20').text())

|

||||||

return result

|

return result

|

||||||

|

def getSerise(htmlcode):

|

||||||

|

html = etree.fromstring(htmlcode, etree.HTMLParser())

|

||||||

|

result = str(html.xpath('/html/body/div[5]/div[1]/div[2]/p[7]/a/text()')).strip(" ['']")

|

||||||

|

return result

|

||||||

def getTag(htmlcode): # 获取演员

|

def getTag(htmlcode): # 获取演员

|

||||||

tag = []

|

tag = []

|

||||||

soup = BeautifulSoup(htmlcode, 'lxml')

|

soup = BeautifulSoup(htmlcode, 'lxml')

|

||||||

@@ -76,10 +75,57 @@ def getTag(htmlcode): # 获取演员

|

|||||||

|

|

||||||

|

|

||||||

def main(number):

|

def main(number):

|

||||||

htmlcode=get_html('https://www.javbus.com/'+number)

|

try:

|

||||||

dww_htmlcode=get_html("https://www.dmm.co.jp/mono/dvd/-/detail/=/cid=" + number.replace("-", ''))

|

htmlcode = get_html('https://www.javbus.com/' + number)

|

||||||

|

dww_htmlcode = get_html("https://www.dmm.co.jp/mono/dvd/-/detail/=/cid=" + number.replace("-", ''))

|

||||||

|

dic = {

|

||||||

|

'title': str(re.sub('\w+-\d+-', '', getTitle(htmlcode))),

|

||||||

|

'studio': getStudio(htmlcode),

|

||||||

|

'year': str(re.search('\d{4}', getYear(htmlcode)).group()),

|

||||||

|

'outline': getOutline(dww_htmlcode),

|

||||||

|

'runtime': getRuntime(htmlcode),

|

||||||

|

'director': getDirector(htmlcode),

|

||||||

|

'actor': getActor(htmlcode),

|

||||||

|

'release': getRelease(htmlcode),

|

||||||

|

'number': getNum(htmlcode),

|

||||||

|

'cover': getCover(htmlcode),

|

||||||

|

'imagecut': 1,

|

||||||

|

'tag': getTag(htmlcode),

|

||||||

|

'label': getSerise(htmlcode),

|

||||||

|

}

|

||||||

|

js = json.dumps(dic, ensure_ascii=False, sort_keys=True, indent=4, separators=(',', ':'), ) # .encode('UTF-8')

|

||||||

|

|

||||||

|

if 'HEYZO' in number or 'heyzo' in number or 'Heyzo' in number:

|

||||||

|

htmlcode = get_html('https://www.javbus.com/' + number)

|

||||||

|

dww_htmlcode = get_html("https://www.dmm.co.jp/mono/dvd/-/detail/=/cid=" + number.replace("-", ''))

|

||||||

|

dic = {

|

||||||

|

'title': str(re.sub('\w+-\d+-', '', getTitle(htmlcode))),

|

||||||

|

'studio': getStudio(htmlcode),

|

||||||

|

'year': getYear(htmlcode),

|

||||||

|

'outline': getOutline(dww_htmlcode),

|

||||||

|

'runtime': getRuntime(htmlcode),

|

||||||

|

'director': getDirector(htmlcode),

|

||||||

|

'actor': getActor(htmlcode),

|

||||||

|

'release': getRelease(htmlcode),

|

||||||

|

'number': getNum(htmlcode),

|

||||||

|

'cover': getCover(htmlcode),

|

||||||

|

'imagecut': 1,

|

||||||

|

'tag': getTag(htmlcode),

|

||||||

|

'label': getSerise(htmlcode),

|

||||||

|

}

|

||||||

|

js2 = json.dumps(dic, ensure_ascii=False, sort_keys=True, indent=4,

|

||||||

|

separators=(',', ':'), ) # .encode('UTF-8')

|

||||||

|

return js2

|

||||||

|

return js

|

||||||

|

except:

|

||||||

|

a=siro.main(number)

|

||||||

|

return a

|

||||||

|

|

||||||

|

def main_uncensored(number):

|

||||||

|

htmlcode = get_html('https://www.javbus.com/' + number)

|

||||||

|

dww_htmlcode = get_html("https://www.dmm.co.jp/mono/dvd/-/detail/=/cid=" + number.replace("-", ''))

|

||||||

dic = {

|

dic = {

|

||||||

'title': getTitle(htmlcode),

|

'title': str(re.sub('\w+-\d+-','',getTitle(htmlcode))).replace(getNum(htmlcode)+'-',''),

|

||||||

'studio': getStudio(htmlcode),

|

'studio': getStudio(htmlcode),

|

||||||

'year': getYear(htmlcode),

|

'year': getYear(htmlcode),

|

||||||

'outline': getOutline(dww_htmlcode),

|

'outline': getOutline(dww_htmlcode),

|

||||||

@@ -89,44 +135,20 @@ def main(number):

|

|||||||

'release': getRelease(htmlcode),

|

'release': getRelease(htmlcode),

|

||||||

'number': getNum(htmlcode),

|

'number': getNum(htmlcode),

|

||||||

'cover': getCover(htmlcode),

|

'cover': getCover(htmlcode),

|

||||||

'imagecut': 1,

|

|

||||||

'tag':getTag(htmlcode)

|

|

||||||

}

|

|

||||||

js = json.dumps(dic, ensure_ascii=False, sort_keys=True, indent=4, separators=(',', ':'),)#.encode('UTF-8')

|

|

||||||

return js

|

|

||||||

|

|

||||||

def main_uncensored(number):

|

|

||||||

htmlcode = get_html('https://www.javbus.com/' + number)

|

|

||||||

dww_htmlcode = get_html("https://www.dmm.co.jp/mono/dvd/-/detail/=/cid=" + number.replace("-", ''))

|

|

||||||

#print('un')

|

|

||||||

#print('https://www.javbus.com/' + number)

|

|

||||||

dic = {

|

|

||||||

'title': getTitle(htmlcode),

|

|

||||||

'studio': getStudio(htmlcode),

|

|

||||||

'year': getYear(htmlcode),

|

|

||||||

'outline': getOutline(htmlcode),

|

|

||||||

'runtime': getRuntime(htmlcode),

|

|

||||||

'director': getDirector(htmlcode),

|

|

||||||

'actor': getActor(htmlcode),

|

|

||||||

'release': getRelease(htmlcode),

|

|

||||||

'number': getNum(htmlcode),

|

|

||||||

'cover': getCover(htmlcode),

|

|

||||||

'tag': getTag(htmlcode),

|

'tag': getTag(htmlcode),

|

||||||

|

'label': getSerise(htmlcode),

|

||||||

'imagecut': 0,

|

'imagecut': 0,

|

||||||

}

|

}

|

||||||

js = json.dumps(dic, ensure_ascii=False, sort_keys=True, indent=4, separators=(',', ':'), ) # .encode('UTF-8')

|

js = json.dumps(dic, ensure_ascii=False, sort_keys=True, indent=4, separators=(',', ':'), ) # .encode('UTF-8')

|

||||||

|

|

||||||

if getYear(htmlcode) == '':

|

if getYear(htmlcode) == '' or getYear(htmlcode) == 'null':

|

||||||

#print('un2')

|

|

||||||

number2 = number.replace('-', '_')

|

number2 = number.replace('-', '_')

|

||||||

htmlcode = get_html('https://www.javbus.com/' + number2)

|

htmlcode = get_html('https://www.javbus.com/' + number2)

|

||||||

#print('https://www.javbus.com/' + number2)

|

dic2 = {

|

||||||

dww_htmlcode = get_html("https://www.dmm.co.jp/mono/dvd/-/detail/=/cid=" + number2.replace("_", ''))

|

'title': str(re.sub('\w+-\d+-','',getTitle(htmlcode))).replace(getNum(htmlcode)+'-',''),

|

||||||

dic = {

|

|

||||||

'title': getTitle(htmlcode),

|

|

||||||

'studio': getStudio(htmlcode),

|

'studio': getStudio(htmlcode),

|

||||||

'year': getYear(htmlcode),

|

'year': getYear(htmlcode),

|

||||||

'outline': getOutline(htmlcode),

|

'outline': '',

|

||||||

'runtime': getRuntime(htmlcode),

|

'runtime': getRuntime(htmlcode),

|

||||||

'director': getDirector(htmlcode),

|

'director': getDirector(htmlcode),

|

||||||

'actor': getActor(htmlcode),

|

'actor': getActor(htmlcode),

|

||||||

@@ -134,13 +156,13 @@ def main_uncensored(number):

|

|||||||

'number': getNum(htmlcode),

|

'number': getNum(htmlcode),

|

||||||

'cover': getCover(htmlcode),

|

'cover': getCover(htmlcode),

|

||||||

'tag': getTag(htmlcode),

|

'tag': getTag(htmlcode),

|

||||||

|

'label':getSerise(htmlcode),

|

||||||

'imagecut': 0,

|

'imagecut': 0,

|

||||||

}

|

}

|

||||||

js = json.dumps(dic, ensure_ascii=False, sort_keys=True, indent=4, separators=(',', ':'), ) # .encode('UTF-8')

|

js2 = json.dumps(dic2, ensure_ascii=False, sort_keys=True, indent=4, separators=(',', ':'), ) # .encode('UTF-8')

|

||||||

#print(js)

|

return js2

|

||||||

return js

|

|

||||||

else:

|

return js

|

||||||

bbb=''

|

|

||||||

|

|

||||||

|

|

||||||

# def return1():

|

# def return1():

|

||||||

|

|||||||

6

proxy.ini

Normal file

6

proxy.ini

Normal file

@@ -0,0 +1,6 @@

|

|||||||

|

[proxy]

|

||||||

|

proxy=127.0.0.1:1080

|

||||||

|

|

||||||

|

[Name_Rule]

|

||||||

|

location_rule='JAV_output/'+actor+'/'+number

|

||||||

|

naming_rule=number+'-'+title

|

||||||

106

siro.py

106

siro.py

@@ -3,79 +3,97 @@ from lxml import etree

|

|||||||

import json

|

import json

|

||||||

import requests

|

import requests

|

||||||

from bs4 import BeautifulSoup

|

from bs4 import BeautifulSoup

|

||||||

|

from ADC_function import *

|

||||||

def get_html(url):#网页请求核心

|

|

||||||

headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/68.0.3440.106 Safari/537.36'}

|

|

||||||

cookies = {'adc':'1'}

|

|

||||||

getweb = requests.get(str(url),timeout=5,cookies=cookies,headers=headers).text

|

|

||||||

try:

|

|

||||||

return getweb

|

|

||||||

except:

|

|

||||||

print("[-]Connect Failed! Please check your Proxy.")

|

|

||||||

|

|

||||||

def getTitle(a):

|

def getTitle(a):

|

||||||

html = etree.fromstring(a, etree.HTMLParser())

|

html = etree.fromstring(a, etree.HTMLParser())

|

||||||

result = str(html.xpath('//*[@id="center_column"]/div[2]/h1/text()')).strip(" ['']")

|

result = str(html.xpath('//*[@id="center_column"]/div[2]/h1/text()')).strip(" ['']")

|

||||||

return result

|

return result.replace('/',',')

|

||||||

def getActor(a): #//*[@id="center_column"]/div[2]/div[1]/div/table/tbody/tr[1]/td/text()

|

def getActor(a): #//*[@id="center_column"]/div[2]/div[1]/div/table/tbody/tr[1]/td/text()

|

||||||